What type of test should I use to compare continuous data between three groups?

Introduction

This page shows how to perform a number of statistical tests using SPSS. Each section gives a brief description of the aim of the statistical test, when it is used, an example showing the SPSS commands and SPSS (often abbreviated) output with a brief interpretation of the output. You can see the page Choosing the Correct Statistical Test for a table that shows an overview of when each test is appropriate to use. In deciding which test is appropriate to use, it is important to consider the type of variables that you have (i.e., whether your variables are categorical, ordinal or interval and whether they are normally distributed); see What is the difference between categorical, ordinal and interval variables? for more information on this.

About the hsb data file

Most of the examples in this page will use a data file called hsb2, high school and beyond. This data file contains 200 observations from a sample of high school students with demographic information about the students, such as their gender (female), socio-economic status (ses) and ethnic background (race). It also contains a number of scores on standardized tests, including tests of reading (read), writing (write), mathematics (math) and social studies (socst). You can get the hsb data file by clicking on hsb2.

One sample t-test

A one sample t-test allows us to test whether a sample mean (of a normally distributed interval variable) significantly differs from a hypothesized value. For example, using the hsb2 data file, say we wish to test whether the average writing score (write) differs significantly from 50. We can do this as shown below.

t-test /testval = 50 /variable = write.

The mean of the variable write for this particular sample of students is 52.775, which is statistically significantly different from the test value of 50. We would conclude that this group of students has a significantly higher mean on the writing test than 50.

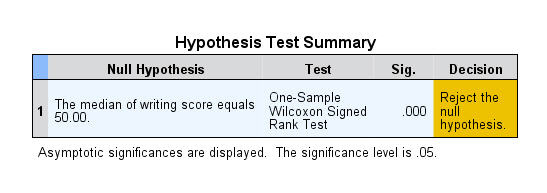

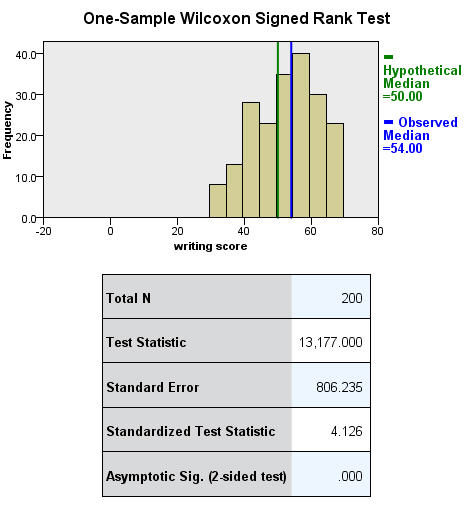

One sample median test

A one sample median test allows us to test whether a sample median differs significantly from a hypothesized value. We will use the same variable, write, as we did in the one sample t-test example above, but we do not need to assume that it is interval and normally distributed (we only need to assume that write is an ordinal variable).

nptests /onesample test (write) wilcoxon(testvalue = 50).

Binomial test

A one sample binomial test allows us to test whether the proportion of successes on a two-level categorical dependent variable significantly differs from a hypothesized value. For example, using the hsb2 data file, say we wish to test whether the proportion of females (female) differs significantly from 50%, i.e., from .5. We can do this as shown below.

npar tests /binomial (.5) = female.

The results indicate that there is no statistically significant difference (p = .229). In other words, the proportion of females in this sample does not significantly differ from the hypothesized value of 50%.
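To see roughly where a p-value like this comes from, the following is a minimal sketch of an exact two-sided binomial test, written in Python rather than SPSS syntax. The count of 109 females out of 200 is an assumption (it is not shown in the text above), and the function name is illustrative. SPSS may use a normal approximation for samples of this size, so small differences in the last digits are possible.

```python
from math import comb

def binomial_two_sided(successes, n, p0=0.5):
    """Exact two-sided p-value: total probability of all outcomes
    that are no more likely than the observed one under H0."""
    def pmf(k):
        return comb(n, k) * p0 ** k * (1 - p0) ** (n - k)

    observed = pmf(successes)
    # Small relative tolerance so ties are not lost to rounding error.
    return sum(pmf(k) for k in range(n + 1) if pmf(k) <= observed * (1 + 1e-7))

# ASSUMPTION: 109 of the 200 students are female (not stated on this page).
p_value = binomial_two_sided(109, 200, 0.5)
print(f"two-sided p = {p_value:.3f}")
```

A p-value well above .05 here matches the conclusion that the proportion of females does not differ significantly from 50%.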

Chi-square goodness of fit

A chi-square goodness of fit test allows us to test whether the observed proportions for a categorical variable differ from hypothesized proportions. For example, let's suppose that we believe that the general population consists of 10% Hispanic, 10% Asian, 10% African American and 70% White folks. We want to test whether the observed proportions from our sample differ significantly from these hypothesized proportions.

npar test /chisquare = race /expected = 10 10 10 70.

These results show that racial composition in our sample does not differ significantly from the hypothesized values that we supplied (chi-square with three degrees of freedom = 5.029, p = .170).
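The statistic can be checked by hand as the sum of (observed - expected)^2 / expected over the categories. The sketch below does this in Python rather than SPSS syntax. The observed counts (24 Hispanic, 11 Asian, 20 African American, 145 White) are an assumption based on the usual hsb2 frequency table and are not shown in the text above; the function name is illustrative.

```python
def chi_square_gof(observed, expected_weights):
    """Chi-square goodness-of-fit statistic and its degrees of freedom.

    expected_weights are relative weights (e.g. 10 10 10 70), rescaled
    so that the expected counts sum to the observed total.
    """
    total = sum(observed)
    weight_sum = sum(expected_weights)
    expected = [total * w / weight_sum for w in expected_weights]
    statistic = sum((o - e) ** 2 / e for o, e in zip(observed, expected))
    return statistic, len(observed) - 1

# ASSUMPTION: race counts for hsb2 (Hispanic, Asian, African American, White).
observed_counts = [24, 11, 20, 145]
statistic, df = chi_square_gof(observed_counts, [10, 10, 10, 70])
print(f"chi-square = {statistic:.3f} on {df} degrees of freedom")
```

Under these assumed counts, the hand calculation agrees with the chi-square value reported above.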

Two independent samples t-test

An independent samples t-test is used when you want to compare the means of a normally distributed interval dependent variable for two independent groups. For example, using the hsb2 data file, say we wish to test whether the mean for write is the same for males and females.

t-test groups = female(0 1) /variables = write.

Because the standard deviations for the two groups are similar (10.3 and 8.1), we will use the "equal variances assumed" test. The results indicate that there is a statistically significant difference between the mean writing score for males and females (t = -3.734, p = .000). In other words, females have a statistically significantly higher mean score on writing (54.99) than males (50.12).
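The "equal variances assumed" statistic can be approximated from the rounded summary numbers quoted above. This is a minimal Python sketch, not SPSS syntax. The group sizes (91 males, 109 females) and the pairing of the standard deviations with the groups (10.3 for males, 8.1 for females) are assumptions not stated in the text, and because the inputs are rounded the result only approximately matches the reported t.

```python
from math import sqrt

def pooled_t(mean1, sd1, n1, mean2, sd2, n2):
    """Two-sample t statistic with a pooled variance estimate."""
    df = n1 + n2 - 2
    pooled_var = ((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / df
    std_err = sqrt(pooled_var * (1 / n1 + 1 / n2))
    return (mean1 - mean2) / std_err, df

# ASSUMPTION: 91 males (sd 10.3) and 109 females (sd 8.1).
t_stat, df = pooled_t(50.12, 10.3, 91, 54.99, 8.1, 109)
print(f"t = {t_stat:.2f} on {df} degrees of freedom")
```

The small gap between this value and the SPSS output comes from using means and standard deviations rounded to a few digits.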

See also

- SPSS Learning Module: An overview of statistical tests in SPSS

Wilcoxon-Mann-Whitney test

The Wilcoxon-Mann-Whitney test is a non-parametric analog to the independent samples t-test and can be used when you do not assume that the dependent variable is a normally distributed interval variable (you only assume that the variable is at least ordinal). You will notice that the SPSS syntax for the Wilcoxon-Mann-Whitney test is almost identical to that of the independent samples t-test. We will use the same data file (the hsb2 data file) and the same variables in this example as we did in the independent t-test example above and will not assume that write, our dependent variable, is normally distributed.

npar test /m-w = write by female(0 1).

The results suggest that there is a statistically significant difference between the underlying distributions of the write scores of males and the write scores of females (z = -3.329, p = 0.001).

See also

- FAQ: Why is the Mann-Whitney significant when the medians are equal?

Chi-square test

A chi-square test is used when you want to see if there is a relationship between two categorical variables. In SPSS, the chisq option is used on the statistics subcommand of the crosstabs command to obtain the test statistic and its associated p-value. Using the hsb2 data file, let's see if there is a relationship between the type of school attended (schtyp) and students' gender (female). Remember that the chi-square test assumes that the expected value for each cell is five or higher. This assumption is easily met in the examples below. However, if this assumption is not met in your data, please see the section on Fisher's exact test below.

crosstabs /tables = schtyp by female /statistic = chisq.

These results indicate that there is no statistically significant relationship between the type of school attended and gender (chi-square with one degree of freedom = 0.047, p = 0.828).

Let's look at another example, this time looking at the relationship between gender (female) and socio-economic status (ses). The point of this example is that one (or both) variables may have more than two levels, and that the variables do not have to have the same number of levels. In this example, female has two levels (male and female) and ses has three levels (low, medium and high).

crosstabs /tables = female by ses /statistic = chisq.

Again we find that there is no statistically significant relationship between the variables (chi-square with two degrees of freedom = 4.577, p = 0.101).

See also

- SPSS Learning Module: An Overview of Statistical Tests in SPSS

Fisher's exact test

Fisher's exact test is used when you want to conduct a chi-square test but one or more of your cells has an expected frequency of five or less. Remember that the chi-square test assumes that each cell has an expected frequency of five or more, but Fisher's exact test has no such assumption and can be used regardless of how small the expected frequency is. In SPSS, unless you have the SPSS Exact Test Module, you can only perform a Fisher's exact test on a 2×2 table, and these results are presented by default. Please see the results from the chi-square example above.
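For intuition about what the exact test computes on a 2×2 table, here is a minimal Python sketch (not SPSS syntax). It holds the row and column totals fixed and sums the hypergeometric probabilities of every table that is no more likely than the observed one. The counts are hypothetical and the function name is illustrative.

```python
from math import comb

def fisher_exact_2x2(a, b, c, d):
    """Two-sided Fisher's exact p-value for the table [[a, b], [c, d]]."""
    row1, row2, col1 = a + b, c + d, a + c
    n = row1 + row2

    def prob(x):
        # Probability that the top-left cell equals x, margins fixed.
        return comb(row1, x) * comb(row2, col1 - x) / comb(n, col1)

    lowest, highest = max(0, col1 - row2), min(row1, col1)
    observed = prob(a)
    return sum(
        prob(x)
        for x in range(lowest, highest + 1)
        if prob(x) <= observed * (1 + 1e-7)  # tolerance for float ties
    )

# Hypothetical small table with expected counts below five.
p_value = fisher_exact_2x2(3, 1, 1, 3)
print(f"two-sided p = {p_value:.4f}")
```

Because every probability is computed exactly, no minimum expected cell count is needed, which is the property described above.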

One-way ANOVA

A one-way analysis of variance (ANOVA) is used when you have a categorical independent variable (with two or more categories) and a normally distributed interval dependent variable and you wish to test for differences in the means of the dependent variable broken down by the levels of the independent variable. For example, using the hsb2 data file, say we wish to test whether the mean of write differs between the three program types (prog). The command for this test would be:

oneway write by prog.

The mean of the dependent variable differs significantly among the levels of program type. However, we do not know if the difference is between only two of the levels or all three of the levels. (The F test for the Model is the same as the F test for prog because prog was the only variable entered into the model. If other variables had also been entered, the F test for the Model would have been different from prog.) To see the mean of write for each level of program type, we can use the means command:

means tables = write by prog.

From this we can see that the students in the academic program have the highest mean writing score, while students in the vocational program have the lowest.

See also

- SPSS Textbook Examples: Design and Analysis, Chapter 7

- SPSS Textbook Examples: Applied Regression Analysis, Chapter 8

- SPSS FAQ: How can I do ANOVA contrasts in SPSS?

- SPSS Library: Understanding and Interpreting Parameter Estimates in Regression and ANOVA

Kruskal Wallis test

The Kruskal Wallis test is used when you have one independent variable with two or more levels and an ordinal dependent variable. In other words, it is the non-parametric version of ANOVA and a generalized form of the Mann-Whitney test method since it permits two or more groups. We will use the same data file as the one way ANOVA example above (the hsb2 data file) and the same variables as in the example above, but we will not assume that write is a normally distributed interval variable.

npar tests /k-w = write by prog (1,3).

If some of the scores receive tied ranks, then a correction factor is used, yielding a slightly different value of chi-squared. With or without ties, the results indicate that there is a statistically significant difference among the three types of programs.
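The effect of the tie correction can be seen in a small hand calculation. The following Python sketch (not SPSS syntax) computes the Kruskal Wallis H statistic with and without the standard correction factor 1 - sum(t^3 - t) / (n^3 - n), where t is the size of each run of tied values. The three small groups are hypothetical, the function name is illustrative, and the sketch assumes the pooled values are not all identical.

```python
def kruskal_wallis_h(groups):
    """Return (H, H corrected for ties) for a list of samples."""
    pooled = sorted(
        (value, g) for g, sample in enumerate(groups) for value in sample
    )
    n = len(pooled)
    rank_sums = [0.0] * len(groups)
    tie_term = 0  # running sum of t^3 - t over runs of t tied values

    i = 0
    while i < n:
        j = i
        while j < n and pooled[j][0] == pooled[i][0]:
            j += 1
        avg_rank = (i + 1 + j) / 2  # mean of the ranks i+1 .. j
        t = j - i
        tie_term += t ** 3 - t
        for k in range(i, j):
            rank_sums[pooled[k][1]] += avg_rank
        i = j

    h = 12 / (n * (n + 1)) * sum(
        r * r / len(sample) for r, sample in zip(rank_sums, groups)
    ) - 3 * (n + 1)
    correction = 1 - tie_term / (n ** 3 - n)
    return h, h / correction

# Hypothetical scores for three groups, including some tied values.
h_raw, h_tied = kruskal_wallis_h([[1, 2, 2], [2, 3, 4], [5, 5, 6]])
print(f"H = {h_raw:.3f}, H corrected for ties = {h_tied:.3f}")
```

The corrected value is always at least as large as the uncorrected one, which is why the two chi-squared values in the SPSS output differ slightly when ties are present.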

Paired t-test

A paired (samples) t-test is used when you have two related observations (i.e., two observations per subject) and you want to see if the means on these two normally distributed interval variables differ from one another. For example, using the hsb2 data file we will test whether the mean of read is equal to the mean of write.

t-test pairs = read with write (paired).

These results indicate that the mean of read is not statistically significantly different from the mean of write (t = -0.867, p = 0.387).
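A paired t-test is simply a one sample t-test on the within-subject differences. The Python sketch below (not SPSS syntax) shows that calculation on a few hypothetical score pairs; the data and the function name are made up for illustration and are not taken from hsb2.

```python
from math import sqrt
from statistics import mean, stdev

def paired_t(first, second):
    """t statistic and degrees of freedom for paired observations."""
    diffs = [a - b for a, b in zip(first, second)]
    n = len(diffs)
    # Mean difference divided by its standard error.
    t_stat = mean(diffs) / (stdev(diffs) / sqrt(n))
    return t_stat, n - 1

# Hypothetical reading and writing scores for four students.
read_scores = [10, 12, 14, 16]
write_scores = [11, 12, 16, 17]
t_stat, df = paired_t(read_scores, write_scores)
print(f"t = {t_stat:.3f} on {df} degrees of freedom")
```

The sign of t only reflects the order in which the two variables are subtracted.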

Wilcoxon signed rank sum test

The Wilcoxon signed rank sum test is the non-parametric version of a paired samples t-test. You use the Wilcoxon signed rank sum test when you do not wish to assume that the difference between the two variables is interval and normally distributed (but you do assume the difference is ordinal). We will use the same example as above, but we will not assume that the difference between read and write is interval and normally distributed.

npar test /wilcoxon = write with read (paired).

The results suggest that there is not a statistically significant difference between read and write.

If you believe the differences between read and write were not ordinal but could merely be classified as positive and negative, then you may want to consider a sign test in lieu of the signed rank test. Again, we will use the same variables in this example and assume that this difference is not ordinal.

npar test /sign = read with write (paired).

We conclude that no statistically significant difference was found (p = .556).

McNemar test

You would perform McNemar's test if you were interested in the marginal frequencies of two binary outcomes. These binary outcomes may be the same outcome variable on matched pairs (like a case-control study) or two outcome variables from a single group. Continuing with the hsb2 dataset used in several above examples, let us create two binary outcomes in our dataset: himath and hiread. These outcomes can be considered in a two-way contingency table. The null hypothesis is that the proportion of students in the himath group is the same as the proportion of students in the hiread group (i.e., that the contingency table is symmetric).

compute himath = (math>60).
compute hiread = (read>60).
execute.
crosstabs /tables=himath BY hiread /statistic=mcnemar /cells=count.

McNemar's chi-square statistic suggests that there is not a statistically significant difference in the proportion of students in the himath group and the proportion of students in the hiread group.
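McNemar's test depends only on the two discordant cells of the table, i.e., the students who are high on one outcome but not on the other. The Python sketch below (not SPSS syntax) computes the uncorrected chi-square statistic and an exact two-sided binomial p-value from those two counts. The counts are hypothetical, the function name is illustrative, and the sketch assumes at least one discordant pair.

```python
from math import comb

def mcnemar(b, c):
    """McNemar statistic and exact p-value from the discordant counts.

    b = pairs that are 'yes' on the first outcome only,
    c = pairs that are 'yes' on the second outcome only.
    """
    n = b + c
    chi_square = (b - c) ** 2 / n  # no continuity correction
    k = min(b, c)
    # Exact test: under H0 each discordant pair falls either way with p = .5.
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    return chi_square, min(1.0, 2 * tail)

# Hypothetical discordant counts.
chi_square, p_exact = mcnemar(10, 5)
print(f"chi-square = {chi_square:.3f}, exact p = {p_exact:.3f}")
```

The concordant cells (high on both, or high on neither) do not enter the calculation, which is why the null hypothesis is stated in terms of symmetry of the table.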

One-way repeated measures ANOVA

You would perform a one-way repeated measures analysis of variance if you had one categorical independent variable and a normally distributed interval dependent variable that was repeated at least twice for each subject. This is the equivalent of the paired samples t-test, but allows for two or more levels of the categorical variable. This tests whether the mean of the dependent variable differs by the categorical variable. We have an example data set called rb4wide, which is used in Kirk's book Experimental Design. In this data set, y is the dependent variable, a is the repeated measure and s is the variable that indicates the subject number.

glm y1 y2 y3 y4 /wsfactor a(4).

You will notice that this output gives four different p-values. The output labeled "sphericity assumed" is the p-value (0.000) that you would get if you assumed compound symmetry in the variance-covariance matrix. Because that assumption is often not valid, the three other p-values offer various corrections (the Huynh-Feldt, H-F, Greenhouse-Geisser, G-G and Lower-bound). No matter which p-value you use, our results indicate that we have a statistically significant effect of a at the .05 level.

See also

- SPSS Textbook Examples from Design and Analysis: Chapter 16

- SPSS Library: Advanced Issues in Using and Understanding SPSS MANOVA

- SPSS Code Fragment: Repeated Measures ANOVA

Repeated measures logistic regression

If you have a binary outcome measured repeatedly for each subject and you wish to run a logistic regression that accounts for the effect of multiple measures from single subjects, you can perform a repeated measures logistic regression. In SPSS, this can be done using the GENLIN command and indicating binomial as the probability distribution and logit as the link function to be used in the model. The exercise data file contains 3 pulse measurements from each of 30 people assigned to 2 different diet regimens and 3 different exercise regimens. If we define a "high" pulse as being over 100, we can then predict the probability of a high pulse using diet regimen.

GET FILE='C:mydatahttps://stats.idre.ucla.edu/wp-content/uploads/2016/02/exercise.sav'.
GENLIN highpulse (REFERENCE=LAST) BY diet (order = DESCENDING)
  /MODEL diet DISTRIBUTION=BINOMIAL LINK=LOGIT
  /REPEATED SUBJECT=id CORRTYPE = EXCHANGEABLE.

These results indicate that diet is not statistically significant (Wald Chi-Square = 1.562, p = 0.211).

Factorial ANOVA

A factorial ANOVA has two or more categorical independent variables (either with or without the interactions) and a single normally distributed interval dependent variable. For example, using the hsb2 data file we will look at writing scores (write) as the dependent variable and gender (female) and socio-economic status (ses) as independent variables, and we will include an interaction of female by ses. Note that in SPSS, you do not need to have the interaction term(s) in your data set. Rather, you can have SPSS create it/them temporarily by placing an asterisk between the variables that will make up the interaction term(s).

glm write by female ses.

These results indicate that the overall model is statistically significant (F = 5.666, p = 0.00). The variables female and ses are also statistically significant (F = 16.595, p = 0.000 and F = 6.611, p = 0.002, respectively). However, the interaction between female and ses is not statistically significant (F = 0.133, p = 0.875).

See also

- SPSS Textbook Examples from Design and Analysis: Chapter 10

- SPSS FAQ: How can I do tests of simple main effects in SPSS?

- SPSS FAQ: How do I plot ANOVA cell means in SPSS?

- SPSS Library: An Overview of SPSS GLM

Friedman test

You perform a Friedman test when you have one within-subjects independent variable with two or more levels and a dependent variable that is not interval and normally distributed (but at least ordinal). We will use this test to determine if there is a difference in the reading, writing and math scores. The null hypothesis in this test is that the distribution of the ranks of each type of score (i.e., reading, writing and math) are the same. To conduct a Friedman test, the data need to be in a long format. SPSS handles this for you, but in other statistical packages you will have to reshape the data before you can conduct this test.

npar tests /friedman = read write math.

Friedman's chi-square has a value of 0.645 and a p-value of 0.724 and is not statistically significant. Hence, there is no evidence that the distributions of the three types of scores are different.
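For readers working outside SPSS, the reshaping step mentioned above looks roughly like this. The Python sketch turns "wide" records (one row per student, one column per score) into "long" records (one row per student per score). The two student records, the output field names and the function name are all hypothetical.

```python
def wide_to_long(rows, id_key, value_keys):
    """Turn one row per subject into one row per subject per measure."""
    long_rows = []
    for row in rows:
        for key in value_keys:
            long_rows.append(
                {"id": row[id_key], "test": key, "score": row[key]}
            )
    return long_rows

# Hypothetical wide-format records for two students.
wide_rows = [
    {"id": 1, "read": 57, "write": 52, "math": 41},
    {"id": 2, "read": 68, "write": 59, "math": 53},
]
long_rows = wide_to_long(wide_rows, "id", ["read", "write", "math"])
for record in long_rows:
    print(record)
```

Each student contributes one long-format row per score, so the id column is what ties the repeated measurements back to the same subject.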

Ordered logistic regression

Ordered logistic regression is used when the dependent variable is ordered, but not continuous. For example, using the hsb2 data file we will create an ordered variable called write3. This variable will have the values 1, 2 and 3, indicating a low, medium or high writing score. We do not generally recommend categorizing a continuous variable in this way; we are simply creating a variable to use for this example. We will use gender (female), reading score (read) and social studies score (socst) as predictor variables in this model. We will use a logit link and on the print subcommand we have requested the parameter estimates, the (model) summary statistics and the test of the parallel lines assumption.

if write ge 30 and write le 48 write3 = 1.
if write ge 49 and write le 57 write3 = 2.
if write ge 58 and write le 70 write3 = 3.
execute.
plum write3 with female read socst /link = logit /print = parameter summary tparallel.

The results indicate that the overall model is statistically significant (p < .001), as are each of the predictor variables (p < .001). There are two thresholds for this model because there are three levels of the outcome variable. We also see that the test of the proportional odds assumption is non-significant (p = .563). One of the assumptions underlying ordinal logistic (and ordinal probit) regression is that the relationship between each pair of outcome groups is the same. In other words, ordinal logistic regression assumes that the coefficients that describe the relationship between, say, the lowest versus all higher categories of the response variable are the same as those that describe the relationship between the next lowest category and all higher categories, etc. This is called the proportional odds assumption or the parallel regression assumption. Because the relationship between all pairs of groups is the same, there is only one set of coefficients (only one model). If this was not the case, we would need different models (such as a generalized ordered logit model) to describe the relationship between each pair of outcome groups.

See also

- SPSS Data Analysis Examples: Ordered logistic regression

- SPSS Annotated Output: Ordinal Logistic Regression

Factorial logistic regression

A factorial logistic regression is used when you have two or more categorical independent variables but a dichotomous dependent variable. For example, using the hsb2 data file we will use female as our dependent variable, because it is the only dichotomous variable in our data set; certainly not because it is common practice to use gender as an outcome variable. We will use type of program (prog) and school type (schtyp) as our predictor variables. Because prog is a categorical variable (it has three levels), we need to create dummy codes for it. SPSS will do this for you by making dummy codes for all variables listed after the keyword with. SPSS will also create the interaction term; simply list the two variables that will make up the interaction separated by the keyword by.

logistic regression female with prog schtyp prog by schtyp /contrast(prog) = indicator(1).

The results indicate that the overall model is not statistically significant (LR chi2 = 3.147, p = 0.677). Furthermore, none of the coefficients are statistically significant either. This shows that the overall effect of prog is not significant.

See also

- Annotated output for logistic regression

Correlation

A correlation is useful when you want to see the relationship between two (or more) normally distributed interval variables. For example, using the hsb2 data file we can run a correlation between two continuous variables, read and write.

correlations /variables = read write.

In the second example, we will run a correlation between a dichotomous variable, female, and a continuous variable, write. Although it is assumed that the variables are interval and normally distributed, we can include dummy variables when performing correlations.

correlations /variables = female write.

In the first example above, we see that the correlation between read and write is 0.597. By squaring the correlation and then multiplying by 100, you can determine what percentage of the variability is shared. Let's round 0.597 to be 0.6, which when squared would be .36, multiplied by 100 would be 36%. Hence read shares about 36% of its variability with write. In the output for the second example, we can see the correlation between write and female is 0.256. Squaring this number yields .065536, meaning that female shares approximately 6.5% of its variability with write.
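The shared-variance arithmetic above is easy to reproduce without rounding the correlation first. A minimal Python sketch (not SPSS syntax; the function name is illustrative) using the two correlations reported in the text:

```python
def shared_variance_pct(r):
    """Percentage of variability shared by two variables with correlation r."""
    return r ** 2 * 100

# Correlations as reported in the text above.
for label, r in [("read and write", 0.597), ("female and write", 0.256)]:
    print(f"{label}: r = {r}, shared variability = {shared_variance_pct(r):.2f}%")
```

Using the unrounded 0.597 gives a figure slightly below the 36% obtained after rounding the correlation to 0.6.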

See also

- Annotated output for correlation

- SPSS Learning Module: An Overview of Statistical Tests in SPSS

- SPSS FAQ: How can I analyze my data by categories?

- Missing Data in SPSS

Simple linear regression

Simple linear regression allows us to look at the linear relationship between one normally distributed interval predictor and one normally distributed interval outcome variable. For example, using the hsb2 data file, say we wish to look at the relationship between writing scores (write) and reading scores (read); in other words, predicting write from read.

regression variables = write read /dependent = write /method = enter.

We see that the relationship between write and read is positive (.552) and based on the t-value (10.47) and p-value (0.000), we would conclude this relationship is statistically significant. Hence, we would say there is a statistically significant positive linear relationship between reading and writing.

See also

- Regression With SPSS: Chapter 1 – Simple and Multiple Regression

- Annotated output for regression

- SPSS Textbook Examples: Introduction to the Practice of Statistics, Chapter 10

- SPSS Textbook Examples: Regression with Graphics, Chapter 2

- SPSS Textbook Examples: Applied Regression Analysis, Chapter 5

Non-parametric correlation

A Spearman correlation is used when one or both of the variables are not assumed to be normally distributed and interval (but are assumed to be ordinal). The values of the variables are converted to ranks and then correlated. In our example, we will look for a relationship between read and write. We will not assume that both of these variables are normal and interval.

nonpar corr /variables = read write /print = spearman.

The results suggest that the relationship between read and write (rho = 0.617, p = 0.000) is statistically significant.

Simple logistic regression

Logistic regression assumes that the outcome variable is binary (i.e., coded as 0 and 1). We have only one variable in the hsb2 data file that is coded 0 and 1, and that is female. We understand that female is a silly outcome variable (it would make more sense to use it as a predictor variable), but we can use female as the outcome variable to illustrate how the code for this command is structured and how to interpret the output. The first variable listed after the logistic command is the outcome (or dependent) variable, and all of the rest of the variables are predictor (or independent) variables. In our example, female will be the outcome variable, and read will be the predictor variable. As with OLS regression, the predictor variables must be either dichotomous or continuous; they cannot be categorical.

logistic regression female with read.

The results indicate that reading score (read) is not a statistically significant predictor of gender (i.e., being female), Wald = .562, p = 0.453. Likewise, the test of the overall model is not statistically significant, LR chi-squared = 0.56, p = 0.453.

See also

- Annotated output for logistic regression

- SPSS Library: What kind of contrasts are these?

Multiple regression

Multiple regression is very similar to simple regression, except that in multiple regression you have more than one predictor variable in the equation. For example, using the hsb2 data file we will predict writing score from gender (female), reading, math, science and social studies (socst) scores.

regression variable = write female read math science socst /dependent = write /method = enter.

The results indicate that the overall model is statistically significant (F = 58.60, p = 0.000). Furthermore, all of the predictor variables are statistically significant except for read.

See also

- Regression with SPSS: Chapter 1 – Simple and Multiple Regression

- Annotated output for regression

- SPSS Frequently Asked Questions

- SPSS Textbook Examples: Regression with Graphics, Chapter 3

- SPSS Textbook Examples: Applied Regression Analysis

Analysis of covariance

Analysis of covariance is like ANOVA, except in addition to the categorical predictors you also have continuous predictors. For example, the one way ANOVA example used write as the dependent variable and prog as the independent variable. Let's add read as a continuous variable to this model, as shown below.

glm write with read by prog.

The results indicate that even after adjusting for reading score (read), writing scores still significantly differ by program type (prog), F = 5.867, p = 0.003.

See also

- SPSS Textbook Examples from Design and Analysis: Chapter 14

- SPSS Library: An Overview of SPSS GLM

- SPSS Library: How do I handle interactions of continuous and categorical variables?

Multiple logistic regression

Multiple logistic regression is like simple logistic regression, except that there are two or more predictors. The predictors can be interval variables or dummy variables, but cannot be categorical variables. If you have categorical predictors, they should be coded into one or more dummy variables. We have only one variable in our data set that is coded 0 and 1, and that is female. We understand that female is a silly outcome variable (it would make more sense to use it as a predictor variable), but we can use female as the outcome variable to illustrate how the code for this command is structured and how to interpret the output. The first variable listed after the logistic regression command is the outcome (or dependent) variable, and all of the rest of the variables are predictor (or independent) variables (listed after the keyword with). In our example, female will be the outcome variable, and read and write will be the predictor variables.

logistic regression female with read write.

These results show that both read and write are significant predictors of female.

See also

- Annotated output for logistic regression

- SPSS Textbook Examples: Applied Logistic Regression, Chapter 2

- SPSS Code Fragments: Graphing Results in Logistic Regression

Discriminant analysis

Discriminant analysis is used when you have one or more normally distributed interval independent variables and a categorical dependent variable. It is a multivariate technique that considers the latent dimensions in the independent variables for predicting group membership in the categorical dependent variable. For example, using the hsb2 data file, say we wish to use read, write and math scores to predict the type of program a student belongs to (prog).

discriminant groups = prog(1, 3) /variables = read write math.

Clearly, the SPSS output for this procedure is quite lengthy, and it is beyond the scope of this page to explain all of it. However, the main point is that two canonical variables are identified by the analysis, the first of which seems to be more related to program type than the second.

See also

- discriminant function analysis

- SPSS Library: A History of SPSS Statistical Features

One-way MANOVA

MANOVA (multivariate analysis of variance) is like ANOVA, except that there are two or more dependent variables. In a one-way MANOVA, there is one categorical independent variable and two or more dependent variables. For example, using the hsb2 data file, say we wish to examine the differences in read, write and math broken down by program type (prog).

glm read write math by prog.

The students in the different programs differ in their joint distribution of read, write and math.

See also

- SPSS Library: Advanced Issues in Using and Understanding SPSS MANOVA

- GLM: MANOVA and MANCOVA

- SPSS Library: MANOVA and GLM

Multivariate multiple regression

Multivariate multiple regression is used when you have two or more dependent variables that are to be predicted from two or more independent variables. In our example using the hsb2 data file, we will predict write and read from female, math, science and social studies (socst) scores.

glm write read with female math science socst.

These results show that all of the variables in the model have a statistically significant relationship with the joint distribution of write and read.

Canonical correlation

Canonical correlation is a multivariate technique used to examine the relationship between two groups of variables. For each set of variables, it creates latent variables and looks at the relationships among the latent variables. It assumes that all variables in the model are interval and normally distributed. SPSS requires that each of the two groups of variables be separated by the keyword with. There need not be an equal number of variables in the two groups (before and after the with).

manova read write with math science /discrim.

* * * * * * A n a l y s i s   o f   V a r i a n c e -- design   1 * * * * * *

EFFECT .. WITHIN CELLS Regression
Multivariate Tests of Significance (S = 2, M = -1/2, N = 97)

Test Name        Value   Approx. F  Hypoth. DF   Error DF  Sig. of F
Pillais         .59783    41.99694        4.00     394.00       .000
Hotellings     1.48369    72.32964        4.00     390.00       .000
Wilks           .40249    56.47060        4.00     392.00       .000
Roys            .59728
Note.. F statistic for WILKS' Lambda is exact.
- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -
EFFECT .. WITHIN CELLS Regression (Cont.)
Univariate F-tests with (2,197) D. F.

Variable   Sq. Mul. R  Adj. R-sq.  Hypoth. MS   Error MS          F
READ           .51356      .50862  5371.66966   51.65523  103.99081
WRITE          .43565      .42992  3894.42594   51.21839   76.03569

Variable   Sig. of F
READ            .000
WRITE           .000
- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -
Raw canonical coefficients for DEPENDENT variables
           Function No.
Variable          1
READ           .063
WRITE          .049
- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -
Standardized canonical coefficients for DEPENDENT variables
           Function No.
Variable          1
READ           .649
WRITE          .467

* * * * * * A n a l y s i s   o f   V a r i a n c e -- design   1 * * * * * *

Correlations between DEPENDENT and canonical variables
           Function No.
Variable          1
READ           .927
WRITE          .854
- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -
Variance in dependent variables explained by canonical variables

CAN. VAR.  Pct Var DE  Cum Pct DE  Pct Var CO  Cum Pct CO
    1          79.441      79.441      47.449      47.449
- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -
Raw canonical coefficients for COVARIATES
           Function No.
COVARIATE         1
MATH           .067
SCIENCE        .048
- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -
Standardized canonical coefficients for COVARIATES
           CAN. VAR.
COVARIATE         1
MATH           .628
SCIENCE        .478
- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -
Correlations between COVARIATES and canonical variables
           CAN. VAR.
Covariate         1
MATH           .929
SCIENCE        .873

* * * * * * A n a l y s i s   o f   V a r i a n c e -- design   1 * * * * * *

Variance in covariates explained by canonical variables

CAN. VAR.  Pct Var DE  Cum Pct DE  Pct Var CO  Cum Pct CO
    1          48.544      48.544      81.275      81.275
- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -
Regression analysis for WITHIN CELLS error term
--- Individual Univariate .9500 confidence intervals

Dependent variable .. READ    reading score
COVARIATE        B     Beta  Std. Err.  t-Value  Sig. of t
MATH        .48129   .43977       .070    6.868       .000
SCIENCE     .36532   .35278       .066    5.509       .000

COVARIATE   Lower -95%  CL- Upper
MATH              .343       .619
SCIENCE           .235       .496

Dependent variable .. WRITE   writing score
COVARIATE        B     Beta  Std. Err.  t-Value  Sig. of t
MATH        .43290   .42787       .070    6.203       .000
SCIENCE     .28775   .30057       .066    4.358       .000

COVARIATE   Lower -95%  CL- Upper
MATH              .295       .571
SCIENCE           .158       .418
- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -

* * * * * * A n a l y s i s   o f   V a r i a n c e -- design   1 * * * * * *

EFFECT .. CONSTANT
Multivariate Tests of Significance (S = 1, M = 0, N = 97)

Test Name        Value     Exact F  Hypoth. DF   Error DF  Sig. of F
Pillais         .11544    12.78959        2.00     196.00       .000
Hotellings      .13051    12.78959        2.00     196.00       .000
Wilks           .88456    12.78959        2.00     196.00       .000
Roys            .11544
Note.. F statistics are exact.
- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -
EFFECT .. CONSTANT (Cont.)
Univariate F-tests with (1,197) D. F.

Variable   Hypoth. SS    Error SS  Hypoth. MS   Error MS         F  Sig. of F
READ        336.96220  10176.0807   336.96220   51.65523   6.52329       .011
WRITE      1209.88188  10090.0231  1209.88188   51.21839  23.62202       .000
- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -
EFFECT .. CONSTANT (Cont.)
Raw discriminant function coefficients
           Function No.
Variable          1
READ           .041
WRITE          .124
- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -
Standardized discriminant function coefficients
           Function No.
Variable          1
READ           .293
WRITE          .889
- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -
Estimates of effects for canonical variables
           Canonical Variable
Parameter         1
    1         2.196

* * * * * * A n a l y s i s   o f   V a r i a n c e -- design   1 * * * * * *

EFFECT .. CONSTANT (Cont.)
Correlations between DEPENDENT and canonical variables
           Canonical Variable
Variable          1
READ           .504
WRITE          .959
- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -

The output above shows the linear combinations corresponding to the first canonical correlation. At the bottom of the output are the two canonical correlations. These results indicate that the first canonical correlation is .7728. The F-test in this output tests the hypothesis that the first canonical correlation is equal to zero. Clearly, F = 56.4706 is statistically significant. However, the second canonical correlation of .0235 is not statistically significantly different from zero (F = 0.1087, p = 0.7420).
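
As a rough cross-check, the four multivariate statistics in the WITHIN CELLS Regression table can be approximately recovered from the two canonical correlations quoted above (.7728 and .0235). The sketch below is in Python rather than SPSS and uses the standard textbook formulas. It assumes that the value SPSS labels Roys is the largest squared canonical correlation, which the numbers appear to support. Small differences are expected because the correlations are rounded to four decimal places.

```python
import math

# Canonical correlations as quoted in the interpretation (rounded to 4 places).
canonical_r = [0.7728, 0.0235]
r_squared = [r ** 2 for r in canonical_r]

# Textbook relationships between squared canonical correlations
# and the multivariate test statistics.
computed = {
    "Pillais": sum(r_squared),                               # sum of r^2
    "Hotellings": sum(r2 / (1.0 - r2) for r2 in r_squared),  # sum of r^2 / (1 - r^2)
    "Wilks": math.prod(1.0 - r2 for r2 in r_squared),        # product of (1 - r^2)
    "Roys": max(r_squared),                                  # assumed: largest r^2
}

# Values copied from the SPSS output above.
reported = {
    "Pillais": 0.59783,
    "Hotellings": 1.48369,
    "Wilks": 0.40249,
    "Roys": 0.59728,
}

for name in reported:
    print(f"{name:<11} computed {computed[name]:.5f}   reported {reported[name]:.5f}")
```

Each computed value should agree with the SPSS table to about three decimal places, which suggests that all four statistics summarize the same two canonical correlations in different ways.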

Factor analysis

Factor analysis is a form of exploratory multivariate analysis that is used to either reduce the number of variables in a model or to detect relationships among variables. All variables involved in the factor analysis need to be interval and are assumed to be normally distributed. The goal of the analysis is to try to identify factors which underlie the variables. There may be fewer factors than variables, but there may not be more factors than variables. For our example using the hsb2 data file, let's suppose that we think that there are some common factors underlying the various test scores. We will include subcommands for varimax rotation and a plot of the eigenvalues. We will use a principal components extraction and will retain two factors. (Using these options will make our results compatible with those from SAS and Stata and are not necessarily the options that you will want to use.)

factor /variables read write math science socst /criteria factors(2) /extraction pc /rotation varimax /plot eigen.

Communality (which is the opposite of uniqueness) is the proportion of variance of the variable (i.e., read) that is accounted for by all of the factors taken together, and a very low communality can indicate that a variable may not belong with any of the factors. The scree plot may be useful in determining how many factors to retain. From the component matrix table, we can see that all five of the test scores load onto the first factor, while all five tend to load not so heavily on the second factor. The purpose of rotating the factors is to get the variables to load either very high or very low on each factor. In this example, because all of the variables loaded onto factor 1 and not on factor 2, the rotation did not aid in the interpretation. Instead, it made the results even more difficult to interpret.
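
To make the terms loading, communality and uniqueness concrete, here is a minimal Python sketch of an unrotated principal components extraction that retains two factors. It does not use the hsb2 file. The five "test scores" are synthetic data generated from one shared component, and all variable names are made up for illustration. It is not meant to reproduce the SPSS tables, and it omits the varimax rotation.

```python
import numpy as np

# Synthetic stand-in for five test scores (NOT the hsb2 data):
# each score is a shared "ability" component plus independent noise.
rng = np.random.default_rng(0)
n_obs, n_vars = 200, 5
ability = rng.normal(size=(n_obs, 1))
scores = 0.8 * ability + 0.6 * rng.normal(size=(n_obs, n_vars))

# Principal components extraction works on the correlation matrix.
corr = np.corrcoef(scores, rowvar=False)
eigvals, eigvecs = np.linalg.eigh(corr)      # eigh returns ascending order
order = np.argsort(eigvals)[::-1]            # re-sort to descending
eigvals, eigvecs = eigvals[order], eigvecs[:, order]

# Retain two factors; a loading is an eigenvector scaled by sqrt(eigenvalue).
n_factors = 2
loadings = eigvecs[:, :n_factors] * np.sqrt(eigvals[:n_factors])

# Communality: variance of each variable explained by the retained factors.
communality = (loadings ** 2).sum(axis=1)
uniqueness = 1.0 - communality

print("eigenvalues (what a scree plot shows):", np.round(eigvals, 3))
print("loadings:\n", np.round(loadings, 3))
print("communality:", np.round(communality, 3))
print("uniqueness: ", np.round(uniqueness, 3))
```

Because the synthetic scores share a single component, the first eigenvalue should dominate and every variable should load mainly on the first factor, which is the same pattern described above for the test scores.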

See also

- SPSS FAQ: What does Cronbach's alpha mean?

Source: https://stats.oarc.ucla.edu/spss/whatstat/what-statistical-analysis-should-i-usestatistical-analyses-using-spss/
